In a world where everything seems to be going digital, the word itself has become almost invisible — something we take for granted. We use it to describe our photos, our music, our communication, and even our identities. But what does it actually mean to be digital?

To understand this, let’s take a step back in time — to the era of the telegraph, and to one of the earliest examples of digital communication: Morse code.

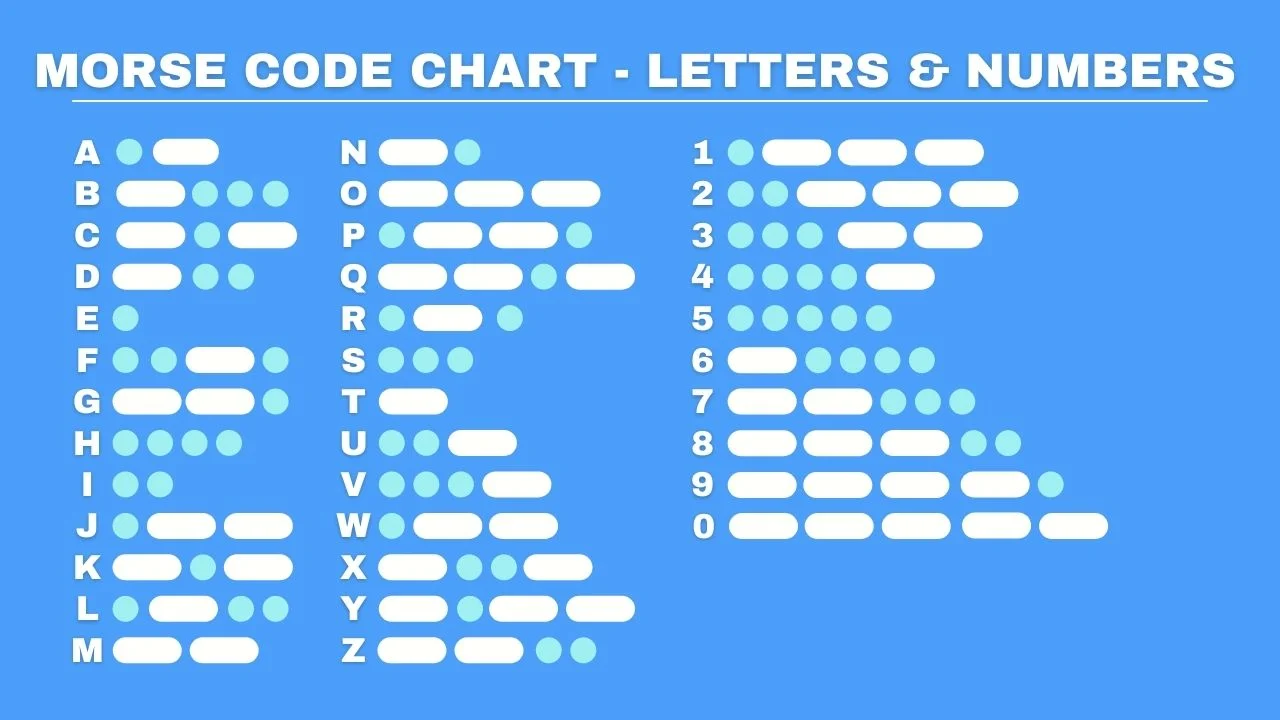

Morse Code: The Original Digital Language

Before computers, smartphones, or fiber optics, there was the telegraph. Developed in the 19th century, it let people send messages across great distances as electrical pulses along a wire. Those pulses weren’t a continuously varying analog signal. They were discrete, clearly defined events: a dot (short pulse) or a dash (long pulse).

If you think about it, Morse code was built on a simple, binary principle:

- Either a signal is being sent (something)
- Or it isn’t (nothing)

Those dots and dashes, strung together in precise patterns, represented letters, numbers, and punctuation. The system didn’t depend on the shape or quality of the signal — only on whether it existed, how long it lasted, and how it was spaced. That’s exactly what digital means.
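To make the idea concrete, here is a minimal Python sketch of a Morse encoder and decoder. It covers only the 26 letters (digits and punctuation are left out for brevity), and it assumes the standard International Morse timing: a dot lasts one time unit and a dash three, with gaps of one unit between the elements of a letter, three between letters, and seven between words. The names `encode`, `decode`, and `to_keying` are illustrative, not from any library. `to_keying` writes the message as the raw on/off signal, one character per time unit, where `1` means the key is down and `0` means it is up.

```python
# International Morse code for the letters A-Z (digits and punctuation omitted).
MORSE = {
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".", "F": "..-.",
    "G": "--.", "H": "....", "I": "..", "J": ".---", "K": "-.-", "L": ".-..",
    "M": "--", "N": "-.", "O": "---", "P": ".--.", "Q": "--.-", "R": ".-.",
    "S": "...", "T": "-", "U": "..-", "V": "...-", "W": ".--", "X": "-..-",
    "Y": "-.--", "Z": "--..",
}
REVERSE = {code: letter for letter, code in MORSE.items()}


def encode(text: str) -> str:
    """Text -> dots and dashes; letters separated by spaces, words by ' / '."""
    return " / ".join(
        " ".join(MORSE[ch] for ch in word) for word in text.upper().split()
    )


def decode(code: str) -> str:
    """Inverse of encode(): look each dot/dash group back up in the table."""
    return " ".join(
        "".join(REVERSE[group] for group in word.split())
        for word in code.split(" / ")
    )


def to_keying(text: str) -> str:
    """Text -> on/off signal, one character per time unit ('1' = on, '0' = off)."""
    words = []
    for word in text.upper().split():
        letters = []
        for ch in word:
            # dot = 1 unit on, dash = 3 units on, joined by 1 unit off
            elements = ["1" if mark == "." else "111" for mark in MORSE[ch]]
            letters.append("0".join(elements))
        words.append("000".join(letters))  # 3 units off between letters
    return "0000000".join(words)  # 7 units off between words


print(encode("SOS"))     # ... --- ...
print(to_keying("SOS"))  # 101010001110111011100010101
print(decode(encode("HELLO WORLD")))  # HELLO WORLD
```

The keyed string contains only two states, on and off. Everything else, including which letter is meant, is carried by how long each state lasts and how the states are spaced.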

The Heart of Digital: Discreteness

The word “digital” comes from “digit,” which goes back to the Latin digitus, “finger”: the units we first counted on, and by extension any countable unit. To be digital is to be discrete, not continuous.

Analog signals, like sound waves or handwriting, can vary infinitely — every curve and shade has meaning. But a digital system doesn’t care about infinite variations; it only cares about distinct states.

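Morse code has two signal elements:

- Dot (short signal)
- Dash (long signal)

Strictly speaking, the silences between them carry meaning too, since gaps of different lengths separate elements, letters, and words. But each of those gaps is also one of a few fixed, countable durations.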

Modern computers use two as well:

- 0 (off)
- 1 (on)

Everything that happens inside your phone, your laptop, or a streaming service ultimately comes down to these two states, much as Morse code came down to signal and silence. Every pixel of a photo, every beat of a song, every word you type is encoded as a sequence of discrete values. Because each value is one of a few clearly distinct states, it can be transmitted, copied, and stored exactly, provided each one is read correctly.
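Here is a minimal Python sketch of what “encoded as a sequence of discrete values” looks like in practice. Text is turned into bytes with UTF-8 (one common text encoding among several), and each byte is shown as eight bits. The pixel example assumes 8 bits per red, green, and blue channel, a common but not universal layout. The function names are illustrative.

```python
def text_to_bits(text: str) -> str:
    """Encode text as UTF-8 bytes and show each byte as 8 binary digits."""
    return " ".join(f"{byte:08b}" for byte in text.encode("utf-8"))


def bits_to_text(bits: str) -> str:
    """Reverse text_to_bits(): parse each 8-bit group and decode as UTF-8."""
    return bytes(int(group, 2) for group in bits.split()).decode("utf-8")


def pixel_to_bits(rgb: tuple[int, int, int]) -> str:
    """One RGB pixel, assuming 8 bits (values 0-255) per color channel."""
    return " ".join(f"{channel:08b}" for channel in rgb)


print(text_to_bits("Hi"))                # 01001000 01101001
print(pixel_to_bits((255, 128, 0)))      # 11111111 10000000 00000000
print(bits_to_text(text_to_bits("Hi")))  # Hi
```

Decoding the bits gives back exactly the same text, with no approximation involved.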

Why Digital Matters

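Analog information degrades as it is stored and copied: a photocopy of a photocopy blurs, and a cassette dubbed from another cassette hisses more than the original. Digital media also wear out and pick up noise, but the damage doesn’t have to accumulate. Because the message is made of discrete symbols (0s and 1s), a slightly distorted value can simply be read as whichever symbol it is closest to. As long as the symbols can still be told apart, the original message can be reconstructed exactly.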

That’s why digital systems are so powerful: they let us preserve, transmit, and reproduce information without loss, copy after copy.
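The difference between the two kinds of copying can be simulated in a few lines of Python. This is a toy model under stated assumptions: every copy adds random noise of at most ±0.1, drawn uniformly. The analog copy keeps that noise, while the digital copy rounds each value back to the nearest valid state (0 or 1) using a threshold of 0.5. The noise model, the 50 generations, and the function names are hypothetical choices for illustration.

```python
import random


def analog_copy(signal: list[float], rng: random.Random, noise: float) -> list[float]:
    """Copy a continuous signal; the added noise stays in the copy."""
    return [x + rng.uniform(-noise, noise) for x in signal]


def digital_copy(bits: list[int], rng: random.Random, noise: float) -> list[int]:
    """Send bits over the same noisy path, then snap each value to 0 or 1."""
    received = [b + rng.uniform(-noise, noise) for b in bits]
    return [1 if x >= 0.5 else 0 for x in received]


def copy_many_times(generations: int = 50, noise: float = 0.1, seed: int = 1):
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    original = [1, 0, 1, 1, 0, 0, 1, 0]
    analog = [float(b) for b in original]
    digital = list(original)
    for _ in range(generations):  # each generation copies the previous copy
        analog = analog_copy(analog, rng, noise)
        digital = digital_copy(digital, rng, noise)
    return original, analog, digital


original, analog, digital = copy_many_times()
drift = max(abs(a - o) for a, o in zip(analog, original))
print("digital copy intact:", digital == original)  # True
print(f"largest analog drift after 50 copies: {drift:.3f}")
```

Because the noise in this model never exceeds 0.1, the threshold always recovers the right bit, while the analog values wander further with each generation. Real channels can occasionally produce noise large enough to flip a bit, which is why practical systems add error-detecting and error-correcting codes on top of this basic idea.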

In essence, being digital means turning the continuous into the countable: converting reality, which is full of infinite variation, into a set of clearly defined symbols that machines (and humans) can handle reliably.
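Turning a continuous signal into countable symbols usually takes two steps. Sampling measures the signal at discrete moments, and quantization rounds each measurement to one of a fixed set of levels. Here is a minimal Python sketch that uses a sine wave as a stand-in for the continuous signal, with 16 samples per cycle and 3 bits (8 levels) per sample. These numbers are arbitrary and far coarser than, for instance, the 16-bit samples used on audio CDs. The function names are illustrative.

```python
import math


def digitize(num_samples: int = 16, bits: int = 3):
    """Sample one cycle of a sine wave and quantize each sample to 2**bits levels."""
    levels = 2 ** bits
    step = 2.0 / (levels - 1)  # evenly spaced levels covering [-1, 1]
    samples, codes = [], []
    for n in range(num_samples):
        x = math.sin(2 * math.pi * n / num_samples)  # measure at a discrete instant
        codes.append(round((x + 1) / step))          # index of the nearest level
        samples.append(x)
    return samples, codes, step


def reconstruct(codes: list[int], step: float) -> list[float]:
    """Map each level index back to the value it stands for."""
    return [-1 + code * step for code in codes]


samples, codes, step = digitize()
bit_string = " ".join(f"{code:03b}" for code in codes)  # 3 bits per sample (bits=3)
error = max(abs(s - r) for s, r in zip(samples, reconstruct(codes, step)))
print(bit_string)
print(f"worst rounding error: {error:.3f} (at most half a step: {step / 2:.3f})")
```

The rounding error is the price of digitizing: the countable version only approximates the original curve. Once that approximation is made, though, the resulting bits can be copied and transmitted without any further loss, and using more bits per sample shrinks the step and therefore the error.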

The Takeaway?

To be digital is to break information into discrete symbols that can be transmitted, stored, and reconstructed without losing meaning.

Morse code showed us how: by reducing language to dots and dashes.

Computers do the same: by reducing everything to zeros and ones.

The essence of “digital” isn’t technology; it’s clarity, precision, and reproducibility. It’s about creating meaning out of patterns that are simple enough to be perfect.

This article was written with the assistance of OpenAI’s GPT-5.