How Neural Networks Work: A Simple Look

When people hear the term neural network, it often brings to mind the brain—billions of neurons firing together to create thoughts, memories, and decisions. Artificial neural networks, the kind used in AI, borrow the metaphor but are far simpler and much more rigid. In this post, we will explore how they work in plain terms, and what we gain (and lose) by building them digitally instead of biologically.

The Digital Version

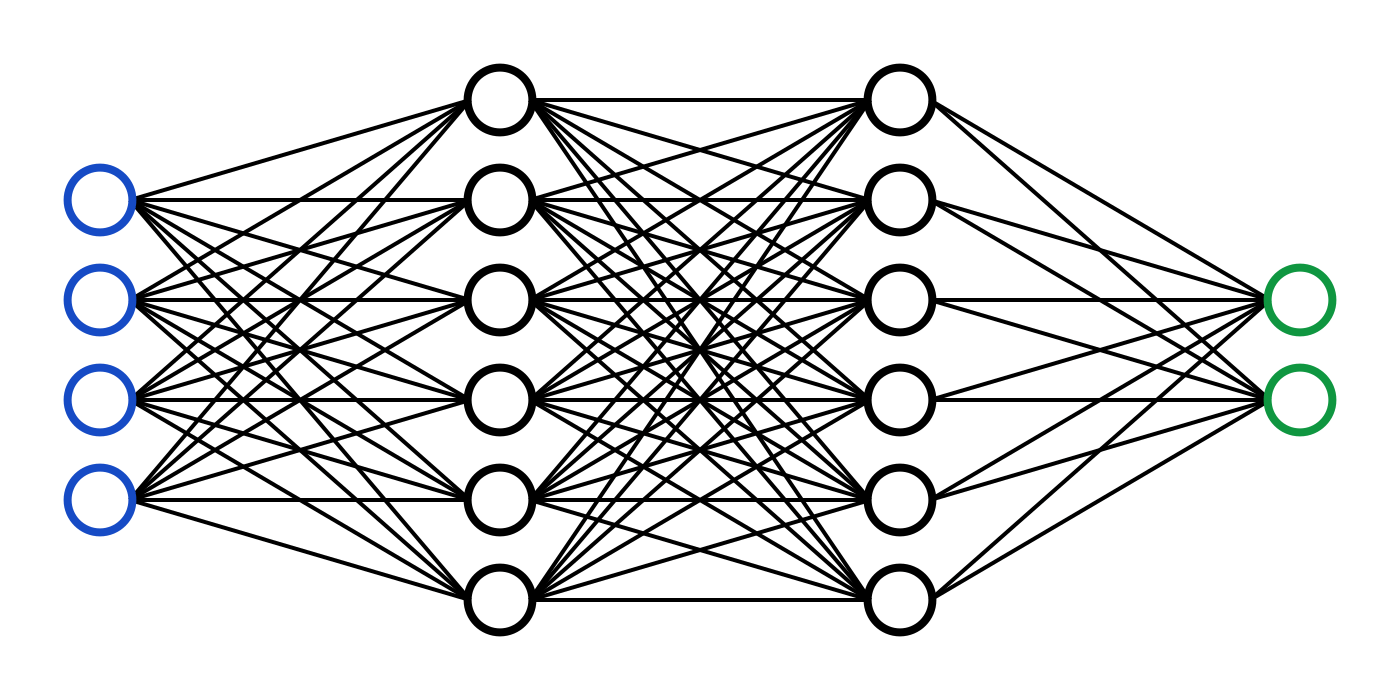

In a computer, a neural network is a set of simple math functions wired together. Imagine calculators stacked in layers. Each calculator takes numbers in, multiplies each one by an adjustable weight, adds the results, applies a simple nonlinear function, and passes the result forward. By stacking enough layers and adjusting the weights at each step, the network learns to recognize patterns, whether it’s spotting a cat in a photo or predicting tomorrow’s weather.
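To make the "calculator" idea concrete, here is a minimal sketch of a single artificial neuron. The function name `neuron`, the example numbers, and the choice of a sigmoid as the squashing function are all assumptions made for illustration; real networks use many variations.

```python
import math

def neuron(inputs, weights, bias):
    """One 'calculator': a weighted sum of the inputs, then a squashing function."""
    # Multiply each input by its weight, add everything up, and add the bias.
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    # Sigmoid (an assumed choice here) squeezes any number into the range 0..1.
    return 1.0 / (1.0 + math.exp(-total))

# Hypothetical inputs and weights, chosen so the weighted sum is exactly zero.
output = neuron([0.5, -1.0], [2.0, 1.0], 0.0)
print(output)  # 0.5
```

A whole network is many of these wired together, with the output of one layer becoming the input of the next.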

Between the input (what you feed in) and the output (the final answer) lie hidden layers. Each one transforms the data a little bit, teasing out features that aren’t obvious at the start. In a network trained on images, for example, the first hidden layer might pick up on edges, the next on shapes, and later ones on whole objects. In general, the deeper the layer, the more abstract the features it can represent.
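As a small illustration of what a hidden layer buys you, the sketch below passes two inputs through one hidden layer and one output. The weights are hand-picked (an assumption for the demo, not learned) so that the network answers "is exactly one input switched on?", a pattern that no single weighted sum of the raw inputs can capture. The helper names `layer`, `relu`, and `forward` are hypothetical.

```python
def relu(value):
    """A common activation: keep positive values, replace negatives with zero."""
    return max(0.0, value)

def identity(value):
    return value

def layer(inputs, weights, biases, activation):
    """One layer: each row of weights defines one 'calculator' in the layer."""
    return [
        activation(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]

# Hand-picked weights (illustrative only, not the result of training).
HIDDEN_WEIGHTS = [[1.0, 1.0], [1.0, 1.0]]
HIDDEN_BIASES = [0.0, -1.0]
OUTPUT_WEIGHTS = [[1.0, -2.0]]
OUTPUT_BIASES = [0.0]

def forward(inputs):
    """Input -> hidden layer -> output."""
    hidden = layer(inputs, HIDDEN_WEIGHTS, HIDDEN_BIASES, relu)
    return layer(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES, identity)[0]

for pair in ([0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]):
    print(pair, "->", forward(pair))
```

The hidden layer computes two intermediate features ("how many inputs are on" and "are both on"), and the output combines them. Deeper networks repeat the same move many times over.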

The learning comes from trial and error. The network makes a guess, measures how wrong it was, and then nudges its weights slightly in the direction that shrinks the error. After repeating this process thousands or even millions of times, it gets pretty good at its task.
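The guess, check, tweak loop can be sketched with the smallest possible "network": a single adjustable weight that has to learn the rule "output is twice the input". The data, the learning rate, and the variable names are assumptions chosen for the demo. Real networks apply the same idea to millions of weights at once, using an algorithm called backpropagation to work out each tweak.

```python
# Toy training data (made up for this sketch): the target is always 2 * x.
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]

weight = 0.0          # the network's "internal math", starting from a bad guess
learning_rate = 0.05  # how big each tweak is (an assumed value)

for step in range(200):
    for x, target in data:
        guess = weight * x       # 1. make a guess
        error = guess - target   # 2. check how wrong it was
        # 3. tweak the weight slightly in the direction that reduces the
        #    squared error (this is one step of gradient descent)
        weight -= learning_rate * error * x

print(round(weight, 4))  # 2.0
```

Each pass shrinks the gap between the weight and the ideal value of 2, which is why repetition matters more than any single update.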

The Natural Version

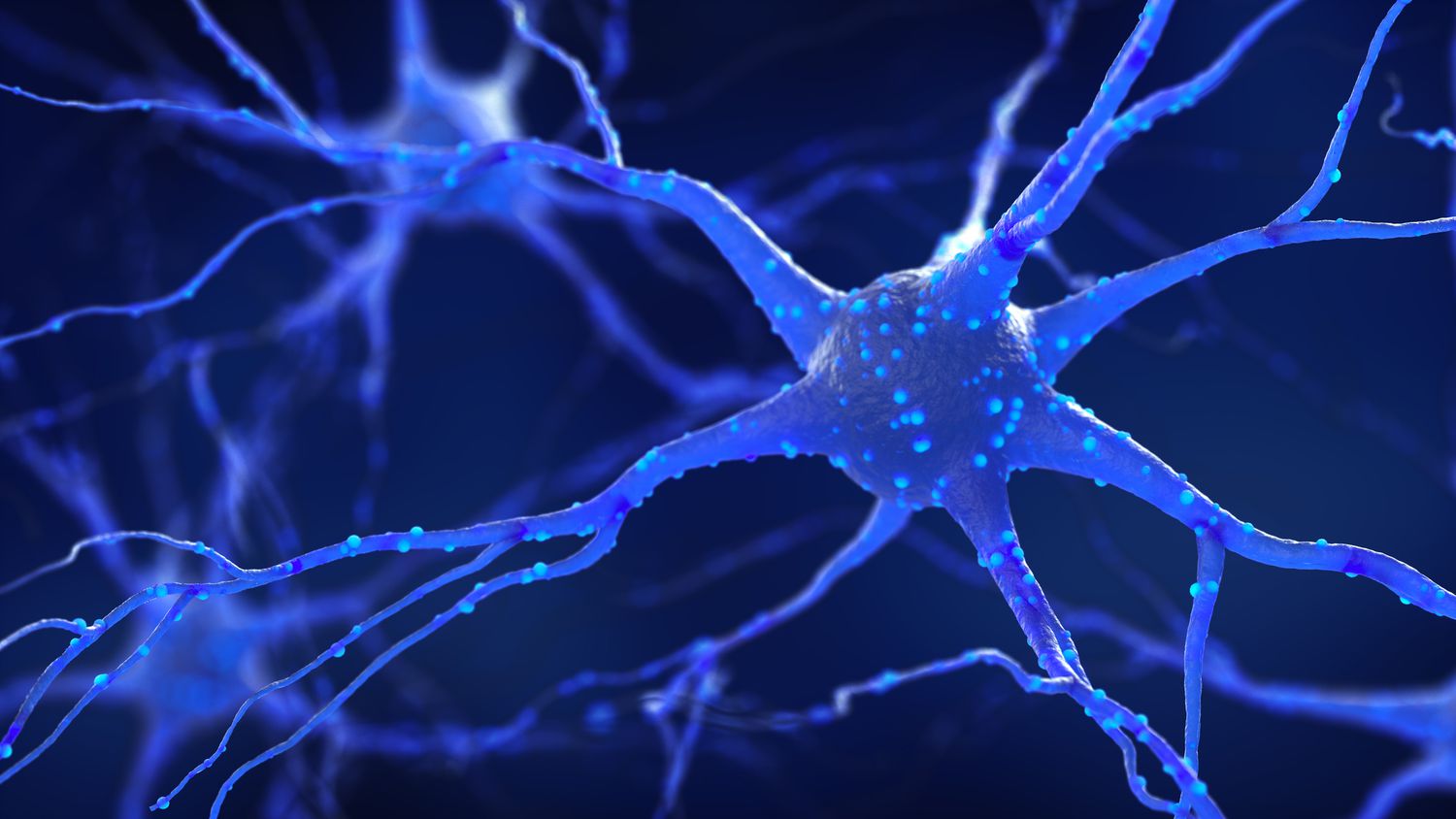

In the human brain, neurons are far more complex. A single neuron doesn’t just add and multiply numbers—it receives chemical signals, modulates them in nonlinear ways, and passes information along through spikes of electricity. Unlike computers, the brain is constantly rewiring itself, forming and pruning connections based on experience, sleep, and even emotion. It’s messy, adaptive, and astonishingly energy-efficient.

What makes the brain especially remarkable is how seamlessly it blends different kinds of information. Neurons don’t work in isolation; they fire in patterns across vast networks, integrating sensory input, memories, and context all at once. This allows humans to generalize from just a handful of experiences, improvise when faced with new situations, and even attach meaning or emotion to raw data—abilities that today’s digital neural networks can only approximate in narrow ways.

What is Gained and Lost

Artificial neural networks aren’t miniature brains—they’re simplified echoes of one small part of how brains work. What they gain in translation is speed and scale: digital networks can crunch vast amounts of data at rates no human could match. They’re also consistent, never tiring or getting distracted, and they specialize beautifully in narrow tasks like language translation or medical image analysis. These strengths make them powerful tools, even if their workings are more mechanical than organic.

But something is lost in this digital simplification. Neural networks are far less flexible than the human mind; a child can grasp the idea of a “dog” after only a few encounters, while a machine often needs thousands of labeled examples. They also adapt poorly to situations unlike the ones they were trained on, sticking closely to patterns they’ve already seen. And unlike the human brain, which draws on chemistry, timing, and emotion to create rich meaning, artificial networks reduce everything to numbers and equations. In this sense, they’re not replicas of intelligence, but carefully engineered tools: sharp, efficient, and limited. They are brilliant assistants shaped by us, but not replacements for the vast, mysterious power of the human mind.

This article was written with the assistance of OpenAI’s GPT-5